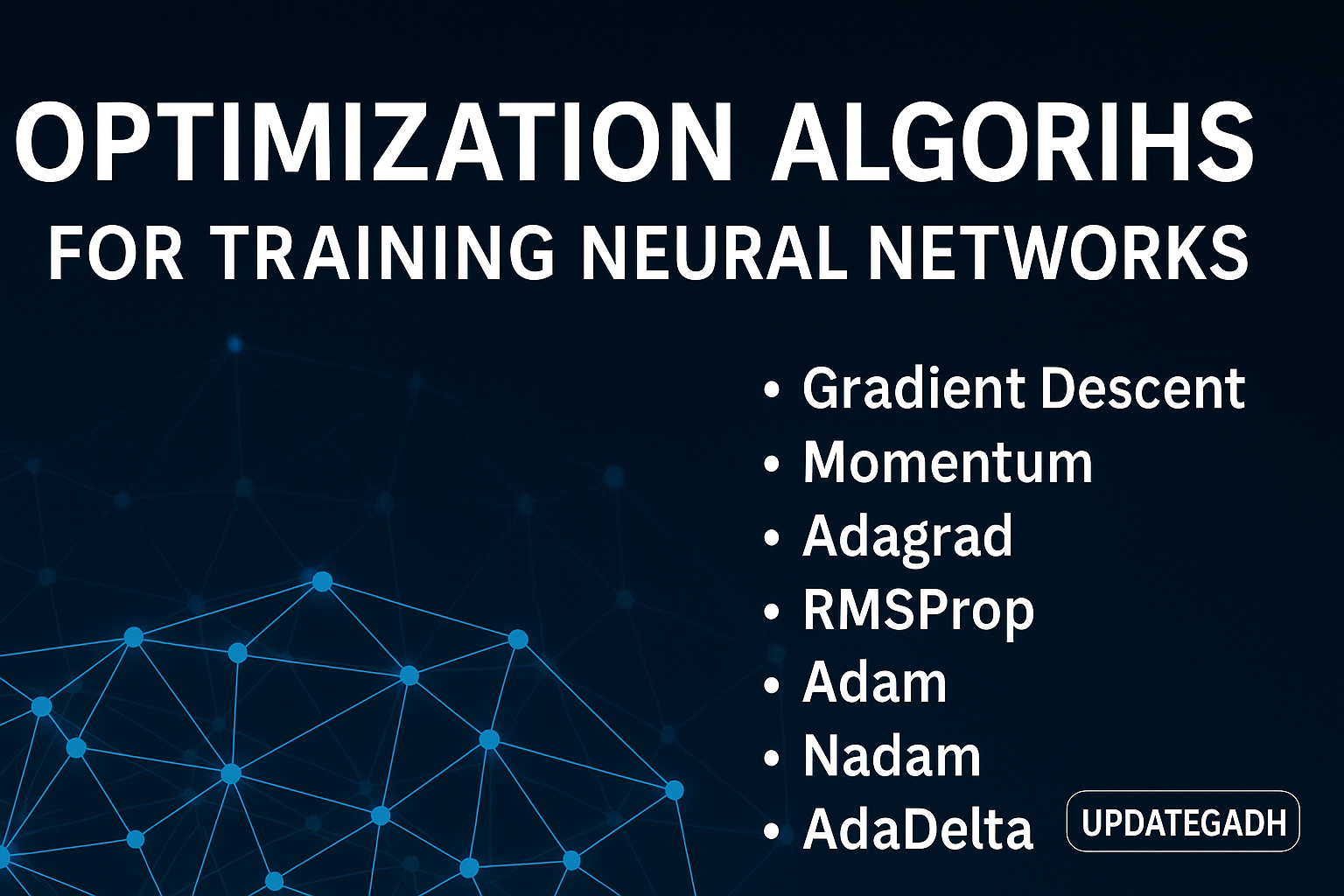

Optimization Algorithms for Training Neural Networks


Neural networks are powerful tools at the core of modern artificial intelligence. However, their true potential is only unlocked through effective training. Training a neural network involves fine-tuning internal parameters—such as weights and biases—so that the model can learn from data and make accurate predictions. This is where optimization algorithms play a pivotal role. They guide the model towards its best possible configuration by minimizing the errors in prediction.

At the center of this process lies the loss function—a mathematical representation of how far the network’s predictions deviate from the actual results. The goal of optimization is to minimize this loss, which in turn improves the overall performance and accuracy of the network.
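To make the idea of a loss function concrete, here is a minimal sketch using mean squared error, one common choice among many. The function name `mse_loss` and the sample values are illustrative assumptions, not something prescribed by a particular library.

```python
def mse_loss(predictions, targets):
    """Mean squared error: the average of the squared differences."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(targets)


# Hypothetical target values and two sets of predictions.
targets = [1.0, 2.0, 3.0]
good_loss = mse_loss([1.1, 1.9, 3.2], targets)  # predictions close to the targets
bad_loss = mse_loss([3.0, 0.0, 5.0], targets)   # predictions far from the targets

print(good_loss, bad_loss)
```

The predictions that sit close to the targets give a loss of about 0.02, while the distant ones give 4.0. A lower loss means better predictions, which is exactly what the optimizer tries to achieve.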


Gradient Descent: The Foundation of Optimization

Gradient Descent (GD) is one of the most fundamental optimization algorithms in machine learning. It is the starting point for understanding how neural networks are trained.

How Gradient Descent Works:

Imagine the loss function as a landscape of hills and valleys. The valleys represent points of low error (better performance), and the hills represent high error (worse performance). Gradient descent attempts to “roll down” the hills toward the lowest point it can reach. Ideally that is the global minimum, although on a non-convex loss surface the algorithm may settle in a local minimum instead.

- Loss Function: A mathematical expression that quantifies how far off the network’s predictions are from the actual values.

- Gradient: A vector that points in the direction of the steepest increase in the loss. By moving in the opposite direction of the gradient, we head toward minimizing the loss.

- Parameter Updates: The algorithm updates the weights and biases of the network step by step, using the gradient to determine the direction and a learning rate to determine the step size.

- Iteration: This process is repeated multiple times—each time adjusting the parameters slightly—until the model reaches an optimal or near-optimal state.
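The four ingredients above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production implementation: it assumes a one-feature linear model `w * x + b`, a mean squared error loss, and a hand-picked learning rate and step count. The function name `gradient_descent` and the toy data are hypothetical.

```python
def gradient_descent(xs, ys, learning_rate=0.05, steps=1000):
    """Fit y ≈ w * x + b by full-batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Prediction error for every example in the dataset.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradient of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Move against the gradient, scaled by the learning rate.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (an assumption for this example).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = gradient_descent(xs, ys)
print(w, b)
```

On this toy data the learned `w` and `b` end up very close to 2 and 1. Note that every single update reads the whole dataset, which is the cost that motivates the stochastic variant.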

Strengths of Gradient Descent:

- Simplicity: The concept of moving in the direction of decreasing loss is easy to understand and implement.

- Versatility: It’s widely used across various domains beyond just deep learning.

Weaknesses of Gradient Descent:

- Slow Convergence: Especially in complex models, GD can take a large number of steps to reach a minimum.

- Local Minima: There’s a chance the model gets stuck in a local minimum, which is not the best solution globally.
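The local-minimum problem is easy to reproduce on a one-dimensional toy function. The sketch below assumes the non-convex loss `x**4 - 3*x**2 + x`, chosen only because it has two valleys of different depth. The function names and starting points are illustrative.

```python
def loss(x):
    """A toy non-convex loss with two valleys of different depth."""
    return x ** 4 - 3 * x ** 2 + x


def loss_gradient(x):
    """Derivative of the toy loss."""
    return 4 * x ** 3 - 6 * x + 1


def descend(x, learning_rate=0.01, steps=1000):
    """Plain gradient descent from the starting point x."""
    for _ in range(steps):
        x -= learning_rate * loss_gradient(x)
    return x


x_right = descend(2.0)   # starts on the right-hand slope
x_left = descend(-2.0)   # starts on the left-hand slope
print(x_right, loss(x_right))
print(x_left, loss(x_left))
```

Both runs stop where the gradient is close to zero, but the run started at 2.0 settles in the shallower valley near x ≈ 1.13, while the run started at -2.0 reaches the deeper one near x ≈ -1.30. Plain gradient descent has no mechanism for telling the two apart, so the result depends on the starting point.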

Despite its limitations, gradient descent remains the backbone of many modern optimizers. More advanced algorithms—like Adam and RMSprop—are built on its fundamental concepts.

Stochastic Gradient Descent: A Faster Alternative

While standard gradient descent can be effective, it becomes computationally expensive with large datasets. Stochastic Gradient Descent (SGD) addresses this issue by modifying how gradients are calculated.

Key Differences from Gradient Descent:

- Batch Size: GD computes gradients over the entire dataset, while SGD uses only a single example or a small batch at each step.

- Computation: Smaller batch sizes make each update much faster, which is highly beneficial for large-scale datasets.
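The difference in how gradients are computed can be sketched as follows. This is a minimal mini-batch SGD loop under the same assumptions as a simple linear model `w * x + b` with mean squared error. The function name `sgd`, the hyperparameter values, and the noise-free toy data are all hypothetical choices made for illustration.

```python
import random


def sgd(xs, ys, batch_size, learning_rate=0.02, epochs=500, seed=0):
    """Fit y ≈ w * x + b with mini-batch stochastic gradient descent."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the examples in a new random order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # The gradient is estimated from this batch only, not the full dataset.
            errors = [(w * xs[i] + b) - ys[i] for i in batch]
            grad_w = 2.0 / len(batch) * sum(e * xs[i] for e, i in zip(errors, batch))
            grad_b = 2.0 / len(batch) * sum(errors)
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (an assumption for this example).
xs = [i / 10 for i in range(50)]
ys = [2.0 * x + 1.0 for x in xs]

w_single, b_single = sgd(xs, ys, batch_size=1)   # one example per update
w_batch, b_batch = sgd(xs, ys, batch_size=10)    # small batch per update
print(w_single, b_single)
print(w_batch, b_batch)
```

With `batch_size=1` the model is updated 50 times per pass over the data, and with `batch_size=10` it is updated 5 times. Setting `batch_size=len(xs)` would recover ordinary full-batch gradient descent.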

Advantages of SGD:

- Faster Training: Less data per update means quicker training cycles.

- Better at Escaping Local Minima: The randomness introduced by smaller batches helps the model avoid getting stuck in suboptimal solutions.

Disadvantages of SGD:

- Noisy Updates: Since each update is based on limited data, the learning path can be unstable.

- Hyperparameter Sensitivity: Selecting an appropriate batch size and learning rate is critical and can significantly impact performance.
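One way to see where the noise comes from is to measure how much the gradient estimate varies from batch to batch. The sketch below assumes a bias-free model `w * x`, a fixed parameter value, and batches drawn without replacement. The function name `gradient_spread` and the data are hypothetical.

```python
import random
import statistics


def gradient_spread(xs, ys, w, batch_size, trials=2000, seed=0):
    """Standard deviation of mini-batch gradient estimates at a fixed w."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        # Draw a random batch without replacement.
        batch = rng.sample(range(len(xs)), batch_size)
        # Gradient of the mean squared error for y ≈ w * x on this batch.
        grad = 2.0 / batch_size * sum((w * xs[i] - ys[i]) * xs[i] for i in batch)
        estimates.append(grad)
    return statistics.pstdev(estimates)


# Toy data generated from y = 2x (an assumption for this example).
xs = [i / 10 for i in range(100)]
ys = [2.0 * x for x in xs]

spreads = {size: gradient_spread(xs, ys, w=0.0, batch_size=size)
           for size in (1, 10, 100)}
for size, spread in spreads.items():
    print(size, spread)
```

The spread shrinks as the batch grows and is essentially zero once the batch covers all 100 examples, because every batch then yields the same full gradient. Smaller batches buy cheaper updates at the price of a noisier direction, which is why batch size and learning rate usually need to be tuned together.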


Choosing the Right Optimizer

When training a neural network, choosing the right optimization algorithm depends on your specific needs:

- If you’re working with smaller datasets or value stability, Gradient Descent might be sufficient.

- For large-scale problems where speed is crucial, Stochastic Gradient Descent can be a better fit—especially when combined with techniques like momentum or learning rate scheduling.
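Momentum and learning rate scheduling can be sketched together in a few lines. To keep the example deterministic it uses an exact gradient of a simple quadratic instead of a noisy mini-batch estimate. In real SGD the `grad_fn` call would be replaced by a mini-batch gradient. The function names, the step-decay schedule, and all hyperparameter values are illustrative assumptions.

```python
def step_decay(initial_rate, step, drop=0.5, every=100):
    """Multiply the learning rate by `drop` after every `every` steps."""
    return initial_rate * (drop ** (step // every))


def momentum_descent(grad_fn, x0, initial_rate=0.1, beta=0.9, steps=300):
    """Gradient descent with classical momentum and a step-decay schedule."""
    x, velocity = x0, 0.0
    for step in range(steps):
        lr = step_decay(initial_rate, step)
        # The velocity keeps a decaying memory of past gradients,
        # which smooths the direction of travel.
        velocity = beta * velocity - lr * grad_fn(x)
        x += velocity
    return x


# Toy loss (x - 3)^2, whose gradient is 2 * (x - 3); the minimum is at x = 3.
x_final = momentum_descent(lambda x: 2.0 * (x - 3.0), x0=0.0)
print(x_final)
```

The run ends very close to the minimum at 3. The decaying learning rate takes large steps early and smaller, more careful steps later, while the velocity term damps the zig-zagging that noisy gradients tend to cause.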

Ultimately, both GD and SGD form the core of many advanced optimization strategies in deep learning. Understanding them is essential before moving on to more complex optimizers like Adam, Adagrad, or RMSprop.

