Normalization in DBMS – A Complete Guide | Updategadh

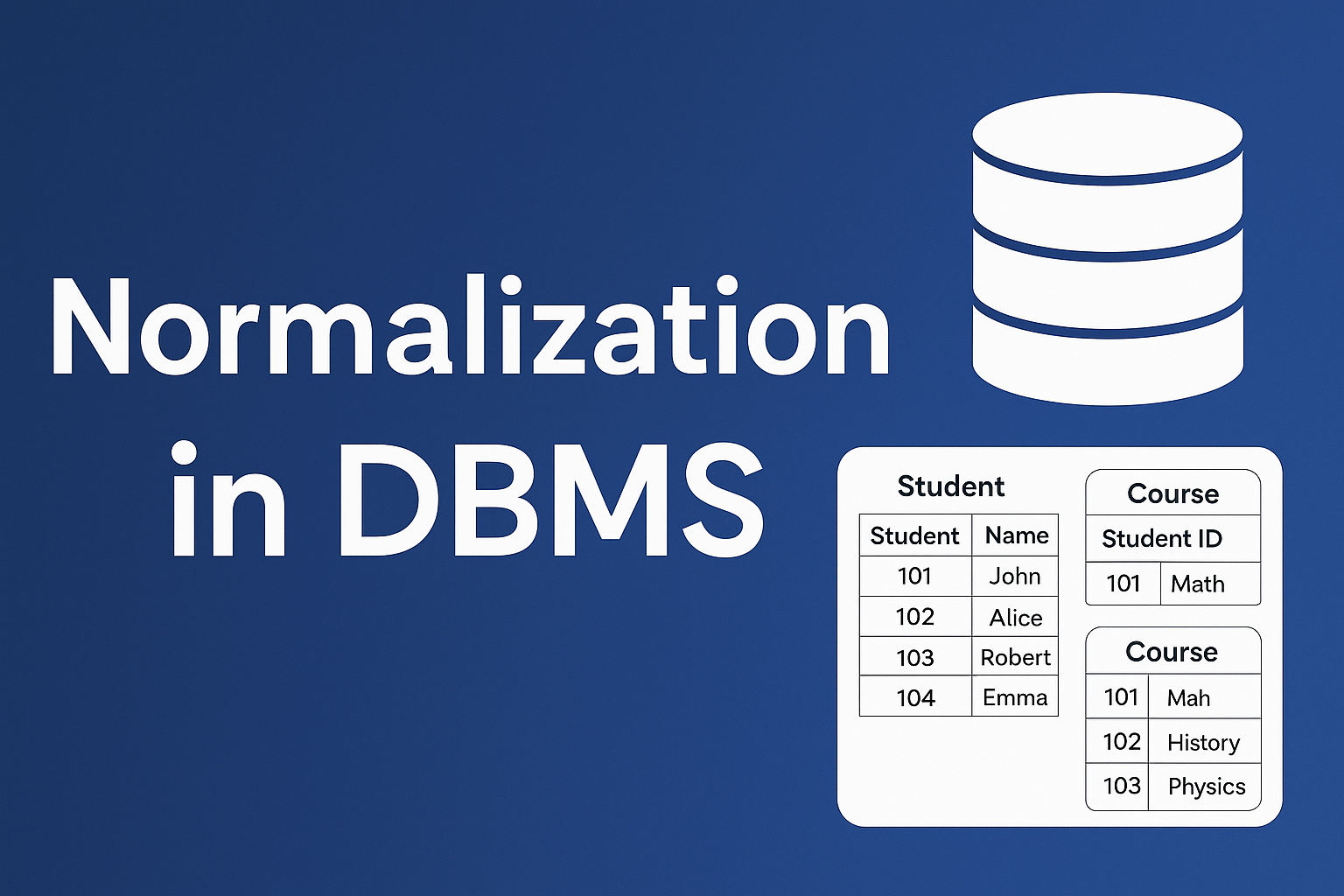

Normalization in DBMS

In the world of database management systems (DBMS), structuring data efficiently is critical for ensuring consistency, minimizing redundancy, and optimizing storage. When large databases are defined as a single relation (or table), it often results in data duplication. This repetition can cause several problems, including:

- Unnecessarily large and unmanageable tables

- Increased difficulty in updating or maintaining data

- Wastage of disk space and computing resources

- A higher risk of errors and data inconsistencies

To address these issues, database designers use Normalization, a systematic process of decomposing large, complex relations into smaller, well-structured ones. In this post from Updategadh, we’ll explore what normalization is, why it’s essential, and how it improves the design and efficiency of a relational database.

Machine Learning Tutorial:-Click Here

Data Science Tutorial:-Click Here

Complete Advance AI topics:- CLICK HERE

Deep Learning Tutorial:- Click Here

What is Normalization?

Normalization is the process of organizing data in a database to reduce redundancy and avoid undesirable characteristics such as insertion, update, and deletion anomalies. To preserve data integrity, it entails breaking up big tables into smaller ones and establishing connections between them.

The goal of normalization is not just to eliminate duplicate data, but also to ensure that the database is free from anomalies that may arise during operations like insertion, deletion, and updating.

Why Do We Need Normalization?

Normalization helps in:

- Removing data anomalies

- Ensuring data consistency

- Improving the efficiency of queries

- Providing a logical structure to data

- Supporting a scalable and flexible database design

Without normalization, databases become prone to inconsistencies, unnecessary duplication, and maintenance difficulties—especially as the database grows in size and complexity.

Types of Data Anomalies

Normalization specifically addresses three kinds of anomalies:

1. Insertion Anomaly

Occurs when certain data cannot be inserted into the database without the presence of other data.

Example: You can’t add a student to a course database unless the student is already enrolled in a course.

2. Deletion Anomaly

Happens when the deletion of one piece of data inadvertently removes related valuable information.

Example: Deleting a course might also delete all records of enrolled students.

3. Update Anomaly

Occurs when a single update requires changes in multiple rows.

Example: Updating a student’s phone number across several rows is error-prone and inefficient.

Types of Normal Forms

Normalization progresses through various stages known as Normal Forms (NFs). Each form builds upon the previous one by introducing stricter rules to remove redundancy and improve structure.

| Normal Form | Description |

|---|---|

| 1NF (First Normal Form) | guarantees that the values of every property are atomic, or indivisible. |

| 2NF (Second Normal Form) | When a table is in 1NF and every non-key attribute depends entirely on the primary key, this is the result. |

| 3NF (Third Normal Form) | If there are no transitive dependencies, a table in 2NF is in 3NF. |

| BCNF (Boyce-Codd Normal Form) | 3NF in a stronger form. Each determinant needs to be a potential key. |

| 4NF (Fourth Normal Form) | If a table has no multi-valued dependents and is in BCNF, it is in 4NF. |

| 5NF (Fifth Normal Form) | If a relation is in 4NF and has no join dependencies, guaranteeing lossless joins, it is in 5NF. |

Steps to Normalize a Database

Normalization typically follows this sequence:

- Start with unnormalized data

- Apply 1NF – Remove repeating groups and ensure atomic values

- Apply 2NF – Remove partial dependencies

- Apply 3NF – Remove transitive dependencies

- Optionally apply BCNF, 4NF, and 5NF depending on the complexity and business requirements

Each step helps eliminate specific types of redundancy and anomalies, resulting in a cleaner, more efficient schema.

Advantages of Normalization

- Reduces data redundancy

- Ensures data consistency and accuracy

- Improves database efficiency and storage optimization

- Enforces data integrity through well-defined relationships

- Provides a logical and scalable database structure

Disadvantages of Normalization

- Requires a deep understanding of business requirements before implementation

- Can negatively impact performance in highly normalized (e.g., 4NF, 5NF) databases due to excessive joins

- The process is time-consuming and can be complex

- Poorly executed normalization may result in inefficient or confusing data models

Complete Python Course with Advance topics:-Click Here

SQL Tutorial :-Click Here

Download New Real Time Projects :-Click here

Conclusion

A fundamental idea in database architecture, normalisation provides an organised method of data organisation and redundancy removal. By understanding and applying normal forms, database designers can create efficient, scalable, and error-resistant systems. However, like many design decisions, normalization requires balance—over-normalization can hinder performance, while under-normalization can lead to data anomalies.

At Updategadh, we believe that a well-normalized database is not just good practice—it’s a necessity for robust and maintainable systems.

Stay tuned for more deep dives into database design and optimization!

normalization in dbms with examples

normalization in dbms with examples pdf

normalization in dbms ppt

types of normalization in dbms

denormalization in dbms

normalization in dbms javatpoint

normalization in dbms gfg

normalization in dbms 1nf 2nf 3nf

Post Comment