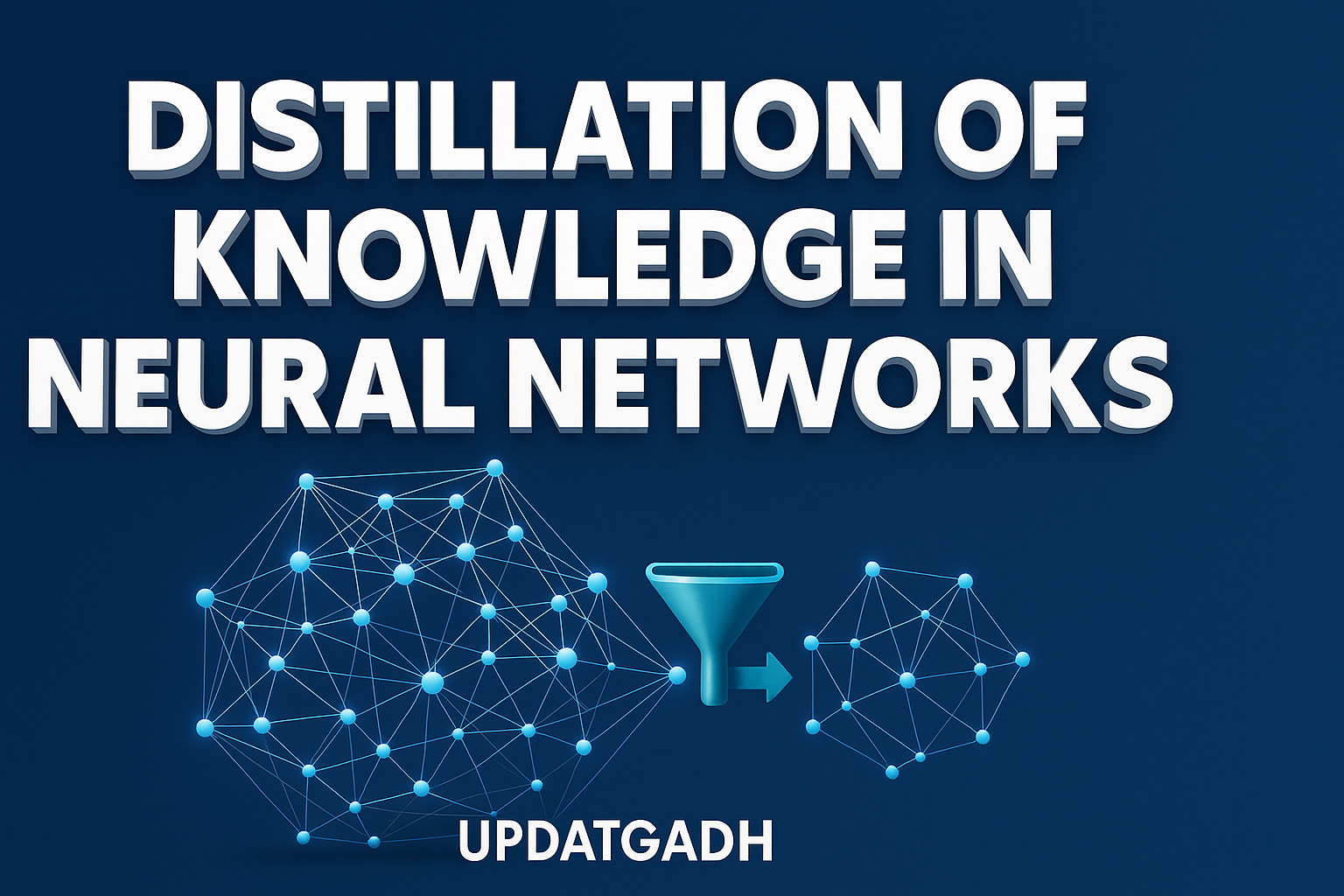

Distillation of Knowledge in Neural Networks

In recent years, artificial neural networks have emerged as a groundbreaking technology, delivering exceptional results in complex tasks such as image recognition, natural language processing, and speech synthesis. However, these achievements often come at the cost of increased model complexity. Large-scale neural networks typically demand significant computational power and memory, which can limit their deployment in resource-constrained environments.

To overcome these challenges, Knowledge Distillation (KD) has gained widespread attention. This method transfers knowledge from a large, complex model (the teacher) to a smaller, more efficient model (the student) while preserving most of the teacher’s performance. This article explores the core concepts, mechanisms, and benefits of knowledge distillation, providing a clear overview of this model compression method.

What is Knowledge Distillation?

Knowledge Distillation is a machine learning technique in which a large, pre-trained neural network (the teacher) transfers what it has learned to a smaller, lighter student model. The goal is to compress the capabilities of the teacher into the student so that the student achieves similar accuracy with significantly lower computational and memory requirements.

This method is particularly valuable for deploying machine learning models on devices with limited resources such as mobile phones, embedded systems, or edge computing platforms.

Key Concepts in Knowledge Distillation

Teacher Model

- Definition: A large, high-capacity neural network trained on a task with high accuracy.

- Role: Provides guidance and knowledge to the student model.

- Characteristics: High complexity, many parameters, computationally expensive to train and deploy.

Student Model

- Definition: A smaller, lightweight neural network designed to emulate the teacher’s performance.

- Role: Learns from the teacher’s outputs and, optionally, from the original ground-truth labels.

- Characteristics: Faster inference, fewer parameters, and suitability for resource-constrained environments.

Soft Targets

- Definition: Probability distributions over classes produced by the teacher model, instead of hard labels.

- Purpose: Soft targets capture the relationships between classes, so they carry richer information than a single hard label.

- Example: For an image labeled “dog,” the teacher might output probabilities like 85% dog, 10% wolf, and 5% fox, helping the student understand inter-class similarities.
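To make the value of soft targets concrete, here is a minimal sketch in plain Python. It compares two hypothetical student predictions against the hard label and against the soft target from the example above. The student probabilities and the helper name `kl_divergence` are illustrative assumptions, not part of any library.

```python
import math

def kl_divergence(target, predicted):
    """KL(target || predicted) for two discrete distributions."""
    # Terms with a zero target probability contribute nothing.
    return sum(t * math.log(t / p) for t, p in zip(target, predicted) if t > 0)

# Class order: dog, wolf, fox.
hard_label = [1.0, 0.0, 0.0]
soft_target = [0.85, 0.10, 0.05]  # teacher output from the example above

# Two hypothetical students, equally confident about "dog".
student_a = [0.80, 0.15, 0.05]  # second choice is wolf, like the teacher
student_b = [0.80, 0.05, 0.15]  # second choice is fox instead

hard_a = kl_divergence(hard_label, student_a)
hard_b = kl_divergence(hard_label, student_b)
soft_a = kl_divergence(soft_target, student_a)
soft_b = kl_divergence(soft_target, student_b)
```

Against the hard label the two students score identically, because only the "dog" probability matters. Against the soft target, the student that ranks "wolf" above "fox" gets the lower loss, which is the extra signal soft targets provide.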

Temperature (T)

- Definition: A hyperparameter applied in the teacher’s softmax function to control the softness of the probability distribution.

- Purpose: Higher temperatures flatten the probability distribution, so the small probabilities the teacher assigns to non-target classes become more visible and the student can learn subtle patterns from them.
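The effect of temperature can be sketched with a few lines of plain Python. The function name `softmax_with_temperature` and the logit values are assumptions chosen for illustration; the idea is simply to divide the logits by T before applying softmax.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert logits to probabilities after dividing them by a temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical teacher logits for the classes dog, wolf, fox.
logits = [4.0, 2.0, 1.0]

sharp = softmax_with_temperature(logits, temperature=1.0)
soft = softmax_with_temperature(logits, temperature=4.0)
```

With T = 4 the top class receives less probability mass than with T = 1 and the other classes receive more, while the ranking of the classes stays the same.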

Loss Function

- Components:

  - Distillation Loss: Encourages the student to match the teacher’s softened outputs, often calculated using Kullback-Leibler (KL) divergence or mean squared error.

  - Supervised Loss: Uses cross-entropy loss to train the student on the original hard labels.

- Purpose: Combining these losses ensures the student benefits from both the teacher’s knowledge and the true labels.
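One common way to write the combined objective is shown below, as a sketch rather than the only formulation. Here $z_s$ and $z_t$ are the student and teacher logits, $\sigma$ is the softmax, $y$ is the ground-truth label, $T$ is the temperature, and $\alpha \in [0, 1]$ is a weighting factor. The symbols $z_s$, $z_t$, and $\sigma$, the choice to put $\alpha$ on the distillation term, and the $T^2$ scaling are assumptions introduced for this illustration.

$$
\mathcal{L} = \alpha \, T^{2} \, \mathrm{KL}\big(\sigma(z_t / T) \,\|\, \sigma(z_s / T)\big) + (1 - \alpha) \, \mathrm{CE}\big(y, \sigma(z_s)\big)
$$

The $T^2$ factor is a convention often used to keep the gradient magnitude of the distillation term roughly comparable across temperatures; some implementations omit it.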

Knowledge Transfer Mechanisms

- Output-based Transfer: Uses the teacher’s soft targets as the training signal for the student.

- Feature-based Transfer: Uses intermediate layer representations from the teacher as guidance.
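Feature-based transfer can be sketched as a loss between intermediate activations. The version below assumes the student and teacher layers have different widths, so a linear projection maps the student features into the teacher's feature space before a mean squared error is taken. The function names, the projection matrix, and the activation values are all hypothetical; in practice the projection would be learned together with the student.

```python
def project(features, weights):
    """Linear map; weights holds one row per output dimension."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]

def feature_matching_loss(student_features, teacher_features, projection):
    """Mean squared error between projected student and teacher features."""
    projected = project(student_features, projection)
    if len(projected) != len(teacher_features):
        raise ValueError("projection output must match teacher feature size")
    squared = [(p - t) ** 2 for p, t in zip(projected, teacher_features)]
    return sum(squared) / len(squared)

# Hypothetical activations: a 2-d student layer and a 3-d teacher layer.
student_features = [1.0, 2.0]
teacher_features = [1.0, 2.0, 3.0]

# 3x2 projection, fixed here for illustration only.
projection = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

loss = feature_matching_loss(student_features, teacher_features, projection)
```

This term is typically added to the output-based losses rather than used alone, so the student matches both the teacher's internal representation and its predictions.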

Flexibility in Model Architecture

The student model architecture can differ from the teacher’s. For example, a convolutional neural network (CNN) teacher could distill knowledge into a lightweight MobileNet student, or a large transformer-based model like BERT could be compressed into DistilBERT.

Trade-Off Parameters

- Temperature (T): Controls output softness.

- Weighting factor (α): Balances the distillation loss and supervised loss during student training.

Tuning these hyperparameters is crucial for optimal performance.

How Does Knowledge Distillation Work?

Knowledge Distillation is essentially a two-stage process: the teacher model’s knowledge is first encoded in soft targets, and the student model then learns to replicate them.

Step-by-Step Process:

- Train the Teacher Model: A large neural network is trained on a dataset using traditional supervised learning, achieving high accuracy but requiring significant computational resources.

- Generate Soft Targets: The teacher processes input samples through a softmax function, often with a temperature parameter that smooths the probabilities, to produce softened class probability distributions.

- Train the Student Model: The student learns by minimizing a combined loss function that includes:

  - Distillation loss (between student and teacher soft targets)

  - Supervised loss (between student predictions and ground-truth labels)

- Optimize the Student: Using optimization algorithms like gradient descent, the student adjusts its parameters to best match the teacher’s behavior while maintaining efficiency.
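The process above can be sketched end to end in plain Python. This is a toy illustration under several assumptions: the teacher is represented only by hard-coded, hypothetical logits, the student is a single linear layer, and the loss is `alpha * T^2 * KL + (1 - alpha) * cross-entropy`, which is one common convention rather than the only one. All names and values are illustrative.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def student_logits(weights, x):
    """Linear student: one weight row per class, no hidden layers."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def combined_loss(s_logits, t_logits, label, alpha, temperature):
    """alpha * T^2 * KL(teacher || student) + (1 - alpha) * cross-entropy."""
    p_teacher = softmax(t_logits, temperature)
    p_student_soft = softmax(s_logits, temperature)
    p_student = softmax(s_logits)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student_soft))
    ce = -math.log(p_student[label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce

def mean_loss(weights, data, alpha, temperature):
    losses = [combined_loss(student_logits(weights, x), t, y, alpha, temperature)
              for x, t, y in data]
    return sum(losses) / len(losses)

def gradient_step(weights, data, alpha, temperature, lr):
    """One full-batch gradient descent update of the student weights, in place."""
    grads = [[0.0] * len(row) for row in weights]
    for x, t_logits, label in data:
        s_logits = student_logits(weights, x)
        p_teacher = softmax(t_logits, temperature)
        p_student_soft = softmax(s_logits, temperature)
        p_student = softmax(s_logits)
        for k in range(len(weights)):
            target = 1.0 if k == label else 0.0
            # Gradient of the combined loss with respect to logit k.
            g = (alpha * temperature * (p_student_soft[k] - p_teacher[k])
                 + (1 - alpha) * (p_student[k] - target))
            for d, xd in enumerate(x):
                grads[k][d] += g * xd / len(data)
    for k, row in enumerate(weights):
        for d in range(len(row)):
            row[d] -= lr * grads[k][d]

# Teacher side: hypothetical logits from an already trained, frozen teacher.
# Each entry is (features with a trailing bias term, teacher logits, true label).
data = [
    ([1.0, 0.0, 1.0], [4.0, 2.0, 1.0], 0),
    ([0.0, 1.0, 1.0], [1.0, 4.0, 2.0], 1),
    ([1.0, 1.0, 1.0], [1.0, 2.0, 4.0], 2),
]
alpha, temperature, lr = 0.5, 2.0, 0.5

# Student side: start from zero weights and minimize the combined loss.
weights = [[0.0, 0.0, 0.0] for _ in range(3)]
initial_loss = mean_loss(weights, data, alpha, temperature)
for _ in range(200):
    gradient_step(weights, data, alpha, temperature, lr)
final_loss = mean_loss(weights, data, alpha, temperature)
```

After the loop, `final_loss` is lower than `initial_loss`. A real setup would use a deep learning framework, mini-batches, and a held-out set to tune `alpha` and `temperature`.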

Why Does Knowledge Distillation Work?

- Information-Rich Supervision: Soft targets contain nuanced inter-class relationships that help the student generalize better than using hard labels alone.

- Capacity Matching: The student focuses on learning essential patterns distilled from the teacher, avoiding overfitting and unnecessary complexity.

- Regularization Effect: Teacher guidance acts as a regularizer, preventing the student from fitting noise or irrelevant features in the training data.

Illustrative Example

Imagine training a large ResNet-50 model on an image classification task with 10 classes. For a given dog image, the teacher outputs soft targets such as 85% dog, 10% wolf, and 5% fox. The student, a smaller MobileNet model, is trained to replicate these distributions while also learning from the original labels. After training, the MobileNet student can reach accuracy close to that of the ResNet-50 teacher, with much faster inference and lower resource use.

Summary

- Pre-train a large teacher model with high accuracy.

- Use the teacher to generate soft targets for the dataset, possibly applying temperature smoothing.

- Train a smaller student model with a combined loss of distillation and supervised losses.

- Deploy the student model, which is lightweight, efficient, and maintains strong performance.

Conclusion

Knowledge Distillation offers a practical solution to the challenge of deploying deep learning models in resource-limited environments. By transferring knowledge from a complex teacher to a simpler student, KD enables the creation of efficient AI systems that retain most of the teacher’s accuracy. This approach plays a vital role in advancing sustainable and accessible AI technologies for real-world applications.

For more insightful articles and updates on machine learning techniques, visit UpdateGadh.
